Starting a greenfield project with microservices is easy. The promises are wonderful but often they are far from reality. This time I’ll share my experience on the truth about microservices when starting a project from scratch.

This is the second article on this topic. You can read the previous one here: Don’t start with microservices – monoliths are your friend.

There was a lot of feedback from the community, from you, sharing your experiences, and it turns out we have a lot in common when it comes to microservices.

Whatever I share in this article series is solely my opinion and the truth is, every environment / organization / project is different. You might make really good use of microservices. You might have success in starting with microservices. I’ve never said you should apply my logic to every single situation because I honestly think there’s no silver bullet here.

The only thing I’m trying to say with this article series is that we should look behind the curtain to understand the implications of any architectural decision we make at the beginning of a new project.

As always, happy to hear your feedback and thoughts.

In the previous article, I covered the infrastructure requirements, faster deployments, organizational culture and the fault isolation aspects of microservices. In this one I’ll continue with the other de-facto promises we all naively read into microservices.

Easier understanding

I think this aspect of microservices is pretty self-explanatory… or not?

The key idea here is that since individual microservices have clear and small responsibilities, it’s much easier to understand what the system is doing because you only need to look at a single microservice instead of the whole thing.

My thought here: this is straight up a false promise. I’ve seen microservice boundaries defined really well, where it was quite easy to understand what a certain microservice was doing, but I’ve seen bad examples too: services doing too much or too little at once, and functionality divided across multiple services, creating a messy system where a single service failure could bring down the whole application.

And you can say, “yeah dude, you didn’t write microservices if that’s the case” or “yeah dude, you f**ked it up” and you’re not that wrong but the thing is, this happens to a lot of projects believe it or not. Not every team/organization/project is perfect and these things happen, probably more than they don’t.

On the other hand, monoliths can also be screwed up from this point of view. I’ve seen monoliths that were really, really hard to understand because of spaghetti code, a huge codebase, lack of tests, lack of docs, different programming styles and so on.

But you can achieve an easily understandable system with a monolith too. There’s no need for a microservice architecture to do that. How? Modularization. You structure the code so that you define boundaries similar to the ones you’d define for microservices, but instead of running those “services”/modules as different apps, they are part of the monolith.
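To make the modularization idea a bit more tangible, here is a minimal sketch of two modules living inside one application. All names (`SessionModule`, `InMemorySessionModule`, `LoginModule`) are hypothetical and only serve the illustration; a real project would use whatever module mechanism its language and framework offer.

```python
from dataclasses import dataclass, field
from typing import Dict, Protocol


class SessionModule(Protocol):
    """Public boundary of the (hypothetical) session module."""

    def open_session(self, user_id: str) -> str: ...

    def is_active(self, session_id: str) -> bool: ...


@dataclass
class InMemorySessionModule:
    """Session module internals; other modules should not touch _sessions."""

    _sessions: Dict[str, str] = field(default_factory=dict)

    def open_session(self, user_id: str) -> str:
        session_id = f"session-{len(self._sessions) + 1}"
        self._sessions[session_id] = user_id
        return session_id

    def is_active(self, session_id: str) -> bool:
        return session_id in self._sessions


@dataclass
class LoginModule:
    """Login module; it depends only on the SessionModule boundary."""

    sessions: SessionModule

    def login(self, user_id: str, password: str) -> str:
        if not password:
            raise ValueError("empty password")
        # A plain in-process call takes the place of what would be an
        # HTTP call or a message between two microservices.
        return self.sessions.open_session(user_id)


# Both modules are wired together and run inside one application.
session_module = InMemorySessionModule()
login_module = LoginModule(sessions=session_module)
```

The boundary is the same one you would draw between a login service and a session service; the only difference is that crossing it is a function call instead of a network hop.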

So for me, this property doesn’t count as something that belongs to microservices only. You can very well mess it up with or without microservices.

Scalability

“You can scale your services horizontally – i.e. starting up multiple instances of a service – to cope with the load”. Heard this so many times.

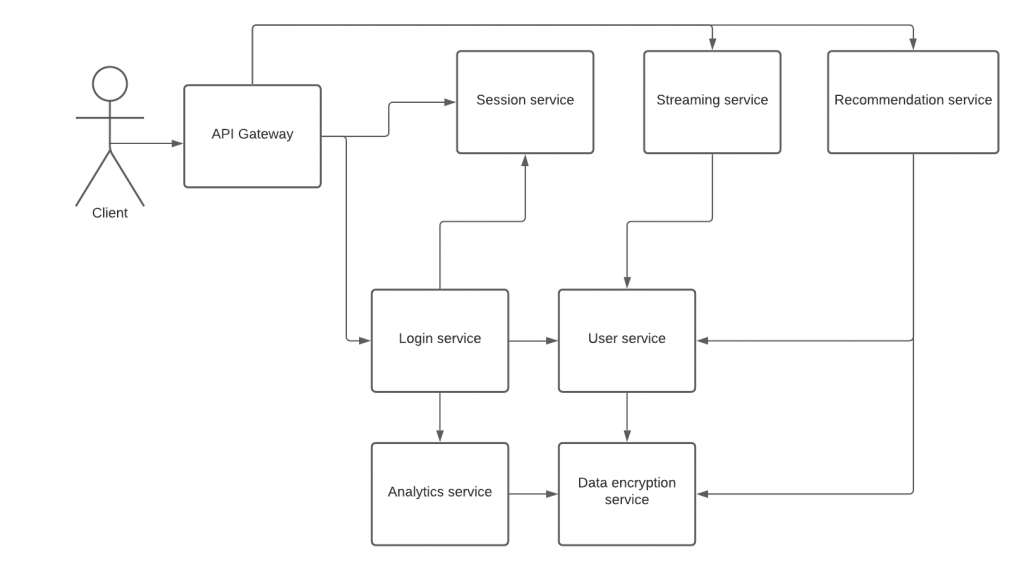

Let’s unfold the truth. Say, you have the following architecture (from the previous article):

Let’s say there are many users active in your app and you have to handle a lot of user sessions. So, you’d need to start up multiple session services.

If the session service is accessible over HTTP, there’s a little difficulty with multiple instances. When the API Gateway or the login service wants to talk to the session service, it has to invoke its HTTP API. With an HTTP API, you have to invoke a specific URL (host + port) that denotes the location of a particular session service instance. If multiple instances are started up, there will be multiple host + port combinations that the consumer services have to use.

One common solution to that problem is using a service registry or a load balancer. With a service registry, instances register themselves on startup in some type of store from which their locations can be retrieved. When a consumer service wants to talk to a particular service, it first asks the service registry for an instance location and then uses that location to talk to that specific instance.
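The registry flow described above can be sketched in a few lines. This is a deliberately naive in-memory version under my own assumptions: the `ServiceRegistry` class, its method names and the host names are made up for illustration, and a real registry would also handle health checks, expiry and replication.

```python
import random
from collections import defaultdict


class ServiceRegistry:
    """Toy in-memory registry mapping a service name to instance locations."""

    def __init__(self):
        self._instances = defaultdict(list)

    def register(self, service_name, host, port):
        """Called by an instance when it starts up."""
        location = (host, port)
        if location not in self._instances[service_name]:
            self._instances[service_name].append(location)

    def deregister(self, service_name, host, port):
        """Called when an instance shuts down."""
        locations = self._instances[service_name]
        if (host, port) in locations:
            locations.remove((host, port))

    def lookup(self, service_name):
        """Called by a consumer to get one instance location."""
        instances = self._instances.get(service_name)
        if not instances:
            raise LookupError(f"no instance registered for {service_name}")
        return random.choice(instances)


registry = ServiceRegistry()
registry.register("session-service", "10.0.0.5", 8080)
registry.register("session-service", "10.0.0.6", 8080)

# The login service resolves a location before making its HTTP call.
host, port = registry.lookup("session-service")
session_url = f"http://{host}:{port}/sessions"
```

Even in this toy form you can see the extra moving part: every consumer now depends on the registry being reachable and up to date.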

Another solution is using a load balancer. Instead of talking to a service directly, you go through a middle layer, another service (i.e. the load balancer), which keeps track of which instances are accessible and proxies the communication. Think of DNS load balancing, for example, or a dedicated load balancer service like an AWS ALB.
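As a rough illustration of what such a middle layer does, here is a small round-robin sketch that skips instances marked as down. The `RoundRobinLoadBalancer` class and the instance addresses are hypothetical; this is not how an AWS ALB or DNS load balancing is implemented, just the basic idea of picking a healthy target per request.

```python
class RoundRobinLoadBalancer:
    """Toy load balancer: rotates over instances and skips unhealthy ones."""

    def __init__(self, instances):
        self._instances = list(instances)
        self._healthy = set(self._instances)
        self._next = 0

    def mark_down(self, instance):
        self._healthy.discard(instance)

    def mark_up(self, instance):
        if instance in self._instances:
            self._healthy.add(instance)

    def pick(self):
        """Return the next healthy instance in rotation."""
        for _ in range(len(self._instances)):
            candidate = self._instances[self._next]
            self._next = (self._next + 1) % len(self._instances)
            if candidate in self._healthy:
                return candidate
        raise RuntimeError("no healthy instance available")


balancer = RoundRobinLoadBalancer(
    ["session-1:8080", "session-2:8080", "session-3:8080"]
)
first_three = [balancer.pick() for _ in range(3)]

# Simulate one instance failing; it is skipped from now on.
balancer.mark_down("session-2:8080")
after_failure = [balancer.pick() for _ in range(3)]
```

Consumers only need to know the balancer’s address, but somebody still has to run the balancer and keep its view of the instances correct.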

So literally when you use HTTP for inter-service communication, you gotta deal with location resolution as well to address the proper service instance. There’s no free lunch.

If the session service is accessible through messaging, for the sake of my example I’ll use Kafka, then you need to deal with a whole other level of problems. Why?

Simply because with Kafka, within a consumer group you can only have as many actively consuming service instances as the topic has partitions. Let’s say you have a topic called session-service-topic, read by the session service, obviously. Scaling on a Kafka topic depends on the number of topic partitions: within a consumer group, each partition is consumed by at most one consumer, so any extra instances sit idle. If we want to be able to scale the session service to 4 instances, we’ll need at least 4 partitions for the topic they’re reading from.
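The relationship between partitions and instances can be shown with a simplified model. The `assign_partitions` function below is my own stand-in and not Kafka’s actual assignment algorithm; it only captures the rule that each partition has a single owner within a consumer group.

```python
def assign_partitions(partition_count, consumers):
    """Spread partitions over consumers; each partition gets one owner."""
    assignment = {consumer: [] for consumer in consumers}
    if not consumers:
        return assignment
    for partition in range(partition_count):
        owner = consumers[partition % len(consumers)]
        assignment[owner].append(partition)
    return assignment


# 4 partitions, 2 session service instances: both instances have work.
two_instances = assign_partitions(4, ["session-a", "session-b"])

# 4 partitions, 6 instances: two instances get nothing to read.
six_instances = assign_partitions(4, [f"session-{i}" for i in range(6)])
idle = [name for name, owned in six_instances.items() if not owned]
```

Starting a fifth or sixth instance in this model adds no throughput at all, which is why the partition count has to be planned together with the scaling target.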

What’s the big deal right? We increase the partition count whenever we want to. Not wrong but you have to remember, the message order is only preserved within a topic partition. What does that mean?

Let’s say the session service handles 2 types of messages:

- UserLoggedInEvent

- UserActivityEvent

Upon receiving the UserLoggedInEvent, the session service will create an internal “session”, probably touch the relevant DB tables and so on. Upon receiving the UserActivityEvent, the session service should update the expiration on the existing user session, probably touch the relevant DB entries.

Now let’s say there are 2 session service instances and one topic with 2 partitions, where both messages are sent. The topic producer uses a round-robin partitioning strategy, meaning one message goes to the first partition and the other to the second. As a result, one service instance receives the UserLoggedInEvent and the other one the UserActivityEvent.

What can happen in this case is that the instance receiving the UserActivityEvent processes it faster than the one receiving the UserLoggedInEvent. If they share the same database, this will probably be an issue because the initial session record has not yet been written into the DB by the other service instance.

Sounds like a small issue but this can only get more complex. I’m not saying there isn’t a solution to this problem, but horizontal scaling isn’t there by default just because you use microservices. You have to work for it.
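One commonly used way to sidestep the scenario above is to partition by a key, such as the user ID, so that all events of one user land on the same partition and keep their relative order. The sketch below compares the two strategies. Note the assumptions: `user-42` is a made-up key, and `zlib.crc32` is only a stand-in for whatever hashing a real producer applies to the message key.

```python
import zlib
from itertools import count

PARTITIONS = 2


def round_robin_partitioner():
    """Ignore the key and rotate over the partitions."""
    counter = count()

    def choose(_key):
        return next(counter) % PARTITIONS

    return choose


def key_partitioner(key):
    """Map a key to a partition with a stable hash (stand-in hash)."""
    return zlib.crc32(key.encode("utf-8")) % PARTITIONS


events = [
    ("user-42", "UserLoggedInEvent"),
    ("user-42", "UserActivityEvent"),
]

choose = round_robin_partitioner()
# The two events of the same user end up on different partitions.
round_robin_placement = [choose(user) for user, _ in events]

# The two events of the same user end up on the same partition.
keyed_placement = [key_partitioner(user) for user, _ in events]
```

This is still not free: with a modulo-based mapping like this one, changing the partition count changes where a key lands, so “we increase the partition count whenever we want to” needs care once ordering matters.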

I’ve seen systems being really hard to horizontally scale with Kafka because it was enormously hard to figure out how to partition the data and solve ordering issues. Also, I’ve seen systems where to avoid all this they started to utilize a single Kafka topic for all inter-service communication without partitioning, throwing the scaling aspect out of the window. HTTP can also work well in certain cases.

The point I’m making here is: don’t expect microservices to be horizontally scalable by default. You have to work for it and deal with exotic issues. Have you ever tried to debug an ordering problem in a microservice environment where the issue only pops up in a regular dev/test environment and not on your local machine? Well, it’s not easy at all.

Technological freedom

My favorite so far. Fortunately or unfortunately I have first-hand experience with this, mostly with how it gets abused just because developers are too creative.

The idea: when you’re in a monolith environment, you’re bound to the programming language and tech stack. If you’re using microservices though, you can write individual services in different programming languages and tech stacks. Say, you use Java for one service, NodeJS for another, Go for the third and so on.

I’d say this is one of the most easily abusable properties of microservices. I’m absolutely not against innovation, and I like innovative solutions that solve existing problems instead of creating new ones.

You might think what’s the big deal of a heterogenous microservice architecture. If you have teams being responsible for services and solely they are the ones touching them, probably it’s not that big of a deal.

But what happens when the organization you’re in is not really ready to adopt microservices, yet tries to do it anyway, and individual teams are not responsible for a certain set of services but touch whatever services they have to when developing new features?

Let’s say you have a team developing the login service and it’s written in Java. Another team is developing the session service. At least this is the case for the project start. Everybody is super hyped for the product, motivated as hell and trying to solve the world’s problem with creative and innovative solutions. So the session service is written in NodeJS because there’s a brand new JS framework that just came out and everybody says it’s awesome, boosts productivity to 1000%, etc.

The MVP is built, the product is running in production and slowly over a couple of months, the builders of session service (the NodeJS service) are starting to transition to other projects, leave the company, whatever. In the meantime, for those couple of months there’s no functional development connected to the session service.

Suddenly the Product Owner says, “hey guys, we need this feature, so we have to extend the session functionality on our platform”. The original session service creators are gone. Another team, probably with no experience with the session service or NodeJS, will need to pick up the work and solve it.

How good do you think their code will be without that experience?

Another thing: DevOps. I’m intentionally not going to cover my view on what DevOps is, but I can recommend reading The Phoenix Project by Gene Kim, Kevin Behr and George Spafford. A lot of organizations are hiring for and building a separate DevOps organization next to the engineering one and guess what, DevOps engineers are not superstars either and they don’t know the specifics of every language/technology. They might just not be able to effectively operate all the services.

Also, what if the Product Owner or the boss of the Product Owner doesn’t care about the different programming languages? If you say building X takes 2 weeks – because the team is considering building it in Java within the existing services they’ve built – and then you say a similar thing to X takes 6 weeks to build because the service is written in another language, will they care? I know this is not optimal and you might say “get out of that company”, but this happens and you can’t ignore it.

Another aspect, what happens to cross-cutting concerns in a heterogenous system?

How to standardize …

- logging?

- security?

- monitoring?

- internationalization?

- error handling?

You have to do all these things for each of the different languages and tech stacks, which is often not that easy and takes a lot of effort from a team. I remember once we were dealing with 80+ microservices when the project was like 5 months old. We wanted to standardize API error handling to produce consistent HTTP codes, and the estimate from a team of 5 was like 107 days. The worst thing was that it wasn’t even a heterogenous system. Everything was written in Java + Spring Boot, yet it would’ve taken that amount of time. Imagine that for 3-4 different programming languages and tech stacks.

Where I think the possibility to use different languages/tech stacks really shines is when you actually need some property that the language or technology provides. For example, I’d be totally open to using Go or NodeJS for low-latency things, while for complex, non-time-critical things Java is a good choice.

And here goes my personal experience with a heterogenous architecture. The system was built on Java, Scala, NodeJS, Erlang and more; I think it was 5 languages in the end. Well, my team had to maintain all of those at once and you can imagine how hard it was. There were different Java versions, different frameworks and so on. It was just a mess. Turns out the reasoning behind the chosen languages was nonsense. They did it just because they felt like it and wanted to experiment.

I honestly think a successful product is made by the people building it and innovation is key. But I also think you have to be careful when making these kinds of decisions and be aware of the implications. From my point of view, “I’d like to try this for no reason” is not a valid argument because it often creates baggage you have to carry along the way, eventually slowing you down.

Scaling teams

This is the best argument for microservices. Imagine having a single team of 10 people working on the same monolith codebase. Works very well, minimal added complexity.

What happens when you try to multiply that and have 100 people? Same codebase, same everything. They push code to the same git repo, they touch the same classes, same tests, working on 10 different features.

Conflicts will happen and people will step on each other’s toes quite quickly.

Microservices are one way to extract the work of teams out of a single codebase into separate ones offering the possibility to work in parallel without making problems for others.

I don’t have a magic number of developers above which it’s better to use microservices, but I know this: it depends on the organization you’re in. You can screw up software development even with 10 people if the manager doesn’t care about the team/project or if there are continuous roadblocks from other organizational parties. Microservices won’t help there for sure.

Takeaways

I’m the very last person who wants to tell if you should go with microservices or with a monolith when starting a new project.

My personal opinion is we definitely can’t avoid making this decision at the beginning. I believe around 75% of new projects can easily go with a monolith, taking advantage of proper modularization so that later on, when the time comes and there’s a need to transition into microservices, the switch is much easier compared to having a spaghetti monolith.

The rest is probably good to go with microservices and I’d choose it too but only if there’s a reason for it. Blindly I’d never choose microservices over a monolith.

You gotta be aware of the product’s expected first 1-2 years, what kind of/how many people are going to do the job, what infrastructure constraints you have, the budget you have, whether the product idea is detailed enough. Putting these together will form the decision.

If the product is a regular end-consumer web app, for example a store, and it’ll have like 5000 users and 100 orders per month for the first 5 years, then it’s probably a bad idea to go with microservices. If the team is going to consist of junior engineers with a single senior developer, it’s probably not a good idea to go with microservices either. If the budget for the project is really small, you’ll end up with a messy system that no one wants to maintain.

Microservices are hard even though they look easy. Trust me, you weren’t born with the capability to write microservices perfectly; we all need to learn it and polish our skills around new concepts. Monoliths done right can also be very difficult, but they’re probably a more natural place to start. It’s not like you don’t have to learn about modularization, testability, separation of concerns and stuff like that. Both approaches are hard.


Nice article. You have the reason as to why (micro)services are a misguided, opinionated, technocratic religion under your 2nd headline.

Services are claimed to provide “clear and small responsibilities”. Well, whatever SOA approach is chosen, there is no formal methodology that defines the premises for how to do the separation. In other words, it’s impossible to do formal, fact-based reasoning about whether a certain service partitioning is good or bad. One can only state opinions. Thus, stating that services shall be “micro” is prejudgmental and misguided.

It’s a difficult subject and most SW-developers seem to be clueless about this. Not even Eric Evans (Domain Driven Design) got it right.

More on this subject can be found on e.g. Wikipedia by searching for “Separation of concerns”, and the pioneering genius to look up is Edsger Wybe Dijkstra (https://en.wikipedia.org/wiki/Edsger_W._Dijkstra).

Thanks Nils. It’s definitely a hard subject.

> ” I’m the very last person who wants to tell if you should go with microservices or with a monolith when starting a new project. ”

https://arnoldgalovics.com/microservices-in-production/

> “Don’t start with microservices in production – monoliths are your friend”

I’m not saying you’re wrong, I just chuckled when I saw this 😅

😀 Well..

> “Another aspect, what happens to cross-cutting concerns in a heterogenous system?”

What are your thoughts on how to work around this? I always see this as a big pain point when using different technologies. When using one language, you can have versioned libraries for these concerns for example. But with X different technologies, it gets really difficult as you have to manage it in X different technologies.

Jumping in here.

I find the appearance of cross-cutting concerns to be a really good indication that the particular perspective/approach taken for the SW-architecture (like SOA) has reached the end of the road. Another, additional approach or architectural building block is needed in order to capture the cross-cutting concern in a clear, non-cross-cutting and efficient way. A SW-architecture is massively multidimensional and SOA is to me a typical 1-dimensional Birmingham screwdriver (i.e. a hammer).

Hi Julian,

A very good question you have there. Honestly I don’t have an answer for that. This is why I prefer homogenous systems over heterogenous ones. The additional work & complexity other languages and technologies might add – in my opinion – usually outweigh the benefits (if any).

That’s why I believe in using a tool to solve a specific problem (and I mentioned this in the article too). If you feel like experimenting with a new tech, good, but you don’t necessarily need to put it into production. If you need a high-throughput, low-latency API gateway because the product needs it, it’s a good candidate to build with something other than Java, for example.

I’m always careful with choosing the tech stack since I’ve experienced how hard implementing cross-cutting concerns could be even in a homogenous system and probably I’m a little biased.

I can only repeat myself: every decision you make during product development has to have a very good reason. Otherwise you’re overengineering.

Hope that explains to some degree.

Arnold

You seem to have a very fundamental misunderstanding of serverless architecture (and REST as a whole).

Session state is just that: a state. You don’t need, or want to broadcast it.

DB race conditions are not applicable. Please read up on your current tech stack for this, but if it is an issue you have bad architecture.

Logging, security etc? Guess what, AWS and Azure do this for you – and they do it better.

I’m sorry, but this article screams of a junior developer unwilling to move on

Hi Bryn,

> You seem to have a very fundamental misunderstanding of serverless architecture

Probably you’re confused a little bit because I haven’t even mentioned serverless.

Cheers.

Arnold

Great post except for only this part:

“What happens when you try to multiply that and have a 100 people? Same codebase, same everything. They push code to the same git repo, they touch the same classes, same tests, working on 10 different features.

Conflicts will happen and people will step on each others’ toe quite quickly.

Microservices are one way to extract the work of teams out of a single codebase into separate ones offering the possibility to work in parallel without making problems for others.”

You can still have 100 people touching the same classes in the same microservice, just as you can have good cohesion and separate classes for every module in a modular monolith.

We need to be careful to not compare the correct application of microservices with the incorrect application of monoliths.

Just to clarify. You can have a modular monolithic codebase that can scale to millions/billions of users/requests and hundreds of thousands of developers, as much as you can have an internal distributed system that does the same.

Very useful for understanding expectations in developed markets